In 2009, Hal Varian, Google's Chief Economist, offered a prescient insight: the ability to harvest massive amounts of data and extract value from it would change modern business. He was right. Today, data science underpins machine learning algorithms that solve business problems and support decision-making. For data practitioners, it all starts with creating data pipelines. This article is a primer on these building blocks of data science.

Running a business in the 21st century makes hiring a data scientist all but inevitable. If some business leaders do not yet feel this need, the newness of the field may be the reason: data science was introduced only in 2001 by William S. Cleveland as an extension of statistics.

The benefits of data science are many, and data pipeline architecture is what makes them possible. Pipelines are an important part of data and analytics.

In this article, we discuss what data pipelines are, why they are needed, types of data passing through them, how to create a data pipeline, and the roles played by data engineers and data scientists in their making.

Data pipelines: A brief

A data pipeline is a series of steps that moves raw data from a source to a destination, where it can be processed and consumed. It is the sum of the processes and tools that enable data integration. In business intelligence, the source can be a transactional database, and the destination is usually a data warehouse or a data lake. The destination is the platform where the data is analyzed to produce business insights.
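To make the idea of "a series of steps from source to destination" concrete, here is a minimal sketch in Python. The function names (`extract`, `transform`, `load`), the sample order records, and the in-memory list standing in for a warehouse are all assumptions made for illustration; a real pipeline would read from an actual database and write to an actual warehouse or lake.

```python
from typing import Iterable

# Hypothetical record type: each raw record is a plain dict.
Record = dict


def extract() -> list[Record]:
    """Stand-in for reading rows from a transactional source database."""
    return [
        {"order_id": 1, "amount": "19.99"},
        {"order_id": 2, "amount": "5.00"},
    ]


def transform(records: Iterable[Record]) -> list[Record]:
    """Convert the amount field from text to a number."""
    return [{**r, "amount": float(r["amount"])} for r in records]


def load(records: Iterable[Record], warehouse: list[Record]) -> None:
    """Stand-in for writing to a data warehouse (here, an in-memory list)."""
    warehouse.extend(records)


def run_pipeline(warehouse: list[Record]) -> None:
    """Chain the steps: source -> transformation -> destination."""
    load(transform(extract()), warehouse)


warehouse: list[Record] = []
run_pipeline(warehouse)
print(warehouse)
```

Keeping each step as its own function means a step can be tested or replaced without touching the others.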

Two major benefits of data pipelines are:

- They consolidate data from various disparate sources into a single common destination. This helps in quick data analysis for the purpose of finding business insights.

- They also ensure consistency in data quality, which is critical for gaining reliable insights.

In a broader sense, two types of data pass through a data pipeline:

Structured data: Data that can be stored and retrieved in a fixed format. It includes email addresses, device-specific statistics, phone numbers, locations, IP addresses, and banking information.

Unstructured data: Data that is difficult to track in a fixed format. It includes social media content, email content, images, mobile phone searches, and online reviews.

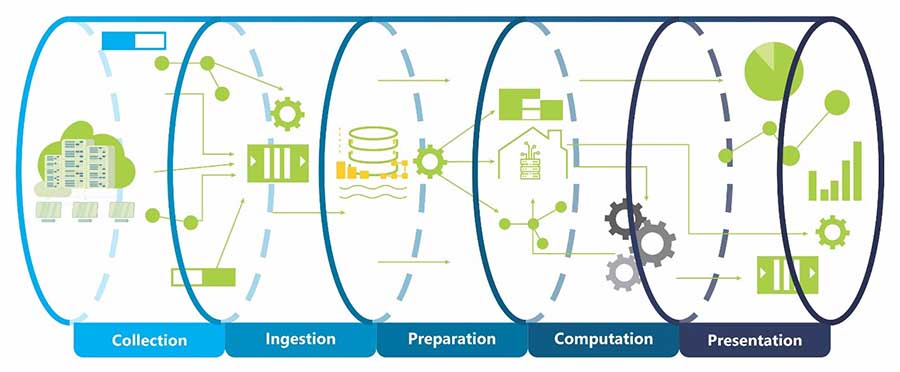

A typical data pipeline

Stages in Data Pipeline

1. Obtaining your data

Data is of paramount importance in data science; without it, there is nothing to analyze. The first and most crucial step is therefore to get the data. But not just any data will do. You must obtain ‘reliable and authentic data’. The reason is simple: garbage in, garbage out.

A rule of thumb is to apply strict checks when obtaining the data. Gather all the available datasets (from internal or external databases, third parties, or the internet) and extract the data into an appropriate format (CSV, XML, JSON, and many more).
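As a rough illustration of gathering data from different formats and applying a strict check on the way in, the sketch below reads CSV and JSON text with Python's standard library and keeps only records that carry every required field. The sample data, the field names, and the `validate` rule are hypothetical; real checks depend on the sources involved.

```python
import csv
import io
import json

# Hypothetical sample inputs; in practice these would be files or API responses.
CSV_TEXT = "customer_id,city\n1,Pune\n2,Austin\n"
JSON_TEXT = '[{"customer_id": 3, "city": "Oslo"}, {"city": "Lima"}]'

# Assumed rule: every record must carry these fields.
REQUIRED_FIELDS = {"customer_id", "city"}


def read_csv(text: str) -> list[dict]:
    """Parse CSV text into a list of dicts keyed by the header row."""
    return [dict(row) for row in csv.DictReader(io.StringIO(text))]


def read_json(text: str) -> list[dict]:
    """Parse a JSON array of objects into a list of dicts."""
    return json.loads(text)


def validate(records: list[dict]) -> list[dict]:
    """Keep only records that contain every required field."""
    return [r for r in records if REQUIRED_FIELDS.issubset(r)]


records = validate(read_csv(CSV_TEXT) + read_json(JSON_TEXT))
print(len(records), "records passed the check")
```

Note that CSV values arrive as strings while JSON values keep their types, so a later step usually has to normalize them.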

Skills required for obtaining the data

- Distributed Storage: Apache Flink/Apache Spark, Hadoop.

- Database Management: PostgreSQL, MySQL, MongoDB.

- Querying Relational Databases

- Unstructured data retrieval: videos, text, documents, audio files.

Cleaning the data is a laborious and time-consuming part of the pipeline. Data often arrives with anomalies such as duplicated values, missing parameters, and irrelevant features, which is why cleaning it is crucial. The results of a machine learning model are only as good as its input. It bears repeating: garbage in, garbage out.

This step can focus on only those aspects of the data that matter for the targeted problem, so it helps to examine the data thoroughly in light of the operations that will be performed on it later. The objectives here include identifying errors, filling holes in the data, removing duplicate entries, and removing corrupt records. Domain expertise is important for judging the impact of any value or feature.
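The cleaning objectives listed above (removing duplicates, dropping corrupt records, filling holes) can be sketched with plain Python. The sample records, the rule that a negative age is corrupt, and the choice to fill gaps with the median are all assumptions for illustration; the right rules depend on the domain.

```python
from statistics import median

# Hypothetical raw records with typical anomalies.
raw = [
    {"id": 1, "age": 34},
    {"id": 1, "age": 34},    # exact duplicate
    {"id": 2, "age": None},  # missing value
    {"id": 3, "age": -5},    # corrupt: negative age
    {"id": 4, "age": 40},
]


def clean(records):
    # 1. Remove duplicate entries, keeping the first occurrence.
    seen, unique = set(), []
    for r in records:
        key = (r["id"], r["age"])
        if key not in seen:
            seen.add(key)
            unique.append(r)

    # 2. Drop corrupt records (assumed rule: age must not be negative).
    valid = [r for r in unique if r["age"] is None or r["age"] >= 0]

    # 3. Fill missing ages with the median of the known ages.
    #    This assumes at least one known age exists; median() raises otherwise.
    known = [r["age"] for r in valid if r["age"] is not None]
    fill = median(known)
    return [{**r, "age": fill if r["age"] is None else r["age"]} for r in valid]


cleaned = clean(raw)
print(cleaned)
```

Libraries such as Pandas offer the same operations on whole tables; the standard library is used here only to keep the sketch self-contained.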

Skills required for cleaning or preparing the data

- Programming languages: R, Python.

- Tools for data modification: NumPy, Pandas, other Python libraries, R.

- Distributed Processing: Spark/MapReduce, Hadoop.

In the data exploration phase, you examine the values and patterns in the data. You should apply different kinds of visualization and statistical tools to support the results. Domain knowledge is essential at this stage so that the visualizations and their interpretation are correctly understood.

The objective of this stage is to explore patterns through visualizations and charts. Applying statistical techniques then supports feature extraction, which leads to the identification and testing of significant variables.
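Before any charts are drawn, exploration often starts with summary statistics and a check of how strongly two variables move together. The sketch below computes both with the standard library. The advertising and sales figures are made up for illustration, and the `pearson` helper is a hand-written implementation of the Pearson correlation coefficient rather than a library call.

```python
from statistics import mean, stdev

# Hypothetical sample: advertising spend and resulting sales.
ad_spend = [10.0, 20.0, 30.0, 40.0, 50.0]
sales = [25.0, 41.0, 62.0, 79.0, 103.0]


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


# Descriptive statistics for one variable.
summary = {
    "mean": mean(sales),
    "stdev": stdev(sales),
    "min": min(sales),
    "max": max(sales),
}

# A value close to 1 suggests ad_spend is a candidate feature for predicting sales.
r = pearson(ad_spend, sales)
print(summary, round(r, 3))
```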

Skills required for visualization or exploration of data

- Python : Pandas, Matplotlib, SciPy, NumPy.

- R: dplyr, ggplot2.

- Statistics: inferential statistics, random sampling.

- Data Visualization : Tableau

In data modeling, the data has already been obtained and cleaned, and the features most crucial for the given problem have been identified. Relevant models are then used as predictive tools, which makes decision-making more data-driven.

The objective of data modeling is to perform an in-depth analysis, which mainly involves creating machine learning models such as a predictive model. This gives predictive power to your data.

Once a machine learning model is created, it must be tested for error rate and performance. Evaluating and refining the model is also part of data modeling, and it takes multiple cycles of evaluation and optimization, because no machine learning model is at its best on the first attempt. Accuracy improves as the model is trained on newly ingested data, data loss is minimized, and so on.

Methods commonly applied at this stage:

- Logarithmic loss

- Classification accuracy

- Confusion matrix

- F1 score

- Area under the curve (AUC)

- Mean squared error

- Mean absolute error
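Most of the metrics listed above reduce to a few lines of arithmetic. The sketch below implements them from their standard definitions for a binary classifier (labels 0 and 1) and for a regression model; the function names and the sample labels are illustrative assumptions. Area under the curve is left out because it needs ranked scores and more code than fits a short example. In practice, a library such as scikit-learn provides tested versions of all of these.

```python
import math


def confusion_matrix(y_true, y_pred):
    """Return (tp, tn, fp, fn) for binary labels 0/1."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    return tp, tn, fp, fn


def accuracy(y_true, y_pred):
    tp, tn, _, _ = confusion_matrix(y_true, y_pred)
    return (tp + tn) / len(y_true)


def f1_score(y_true, y_pred):
    tp, _, fp, fn = confusion_matrix(y_true, y_pred)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


def log_loss(y_true, probs, eps=1e-15):
    """Logarithmic loss; probs are predicted probabilities of class 1."""
    total = 0.0
    for t, p in zip(y_true, probs):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += t * math.log(p) + (1 - t) * math.log(1 - p)
    return -total / len(y_true)


def mean_squared_error(y_true, y_pred):
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def mean_absolute_error(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


# Hypothetical labels and predictions.
y_true = [1, 0, 1, 1, 0]
y_pred = [1, 0, 0, 1, 1]
print(confusion_matrix(y_true, y_pred), accuracy(y_true, y_pred))
```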

Skills required in data modeling

- Machine Learning: Unsupervised or Supervised algorithms.

- Methods of evaluation.

- Libraries for machine learning: Python (NumPy, scikit-learn).

- Multivariate Calculus and linear algebra.

Interpretation of the data entails communicating the findings to the stakeholders. Without a proper explanation of the findings for the interested parties, whatever work a data scientist has done is of little use. That is why this step is crucial.

Data interpretation first aims at understanding the business goals and then linking them correctly to the data findings. A domain expert may be required for correlating business problems with the findings. They can enable visualization of the findings and help in communicating the facts to the non-technical stakeholders.

Skills required for interpretation of the data

- Domain knowledge of the business.

- Tools for data visualization : D3.js, Tableau, ggplot2, Matplotlib, Seaborn.

- Abilities to communicate: Speaking, presenting, writing, and reporting.

Updating the model

Once the model is deployed in production, it becomes essential to revisit and update it periodically. How often you update depends on how frequently you receive new data and on whether the business has changed.

For instance, suppose you are a data professional at a transportation company, and the company decides to open a division for electric vehicles in response to new consumer trends. If your old model does not cover this sector, you must revisit it and include data on these new types of vehicles. If you do not revisit or update the model, it will degrade over time and stop meeting requirements. Adding new information or features changes the model's performance and keeps it relevant.
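One simple signal that a model needs revisiting is incoming data containing categories the model never saw during training, as with the electric vehicles in the example. The sketch below checks for that. The function name `unseen_categories`, the `vehicle_type` field, and the sample values are hypothetical, and a real monitoring setup would also track error rates on new data.

```python
def unseen_categories(trained_categories, new_records, field="vehicle_type"):
    """Return categories present in new data but absent from the training data."""
    seen_in_new = {r[field] for r in new_records}
    return sorted(seen_in_new - set(trained_categories))


# Hypothetical categories the old model was trained on.
trained = ["diesel", "petrol"]

# Hypothetical incoming records after the new division opens.
incoming = [
    {"vehicle_type": "petrol", "km": 120},
    {"vehicle_type": "electric", "km": 95},
]

missing = unseen_categories(trained, incoming)
if missing:
    print("Model should be revisited; unseen categories:", missing)
```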

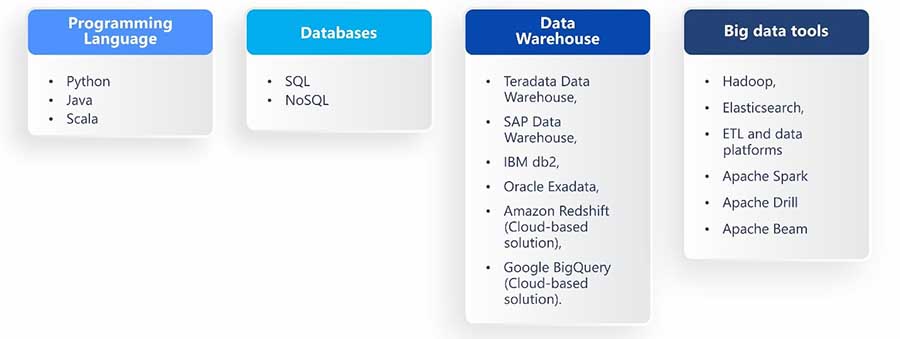

Role of a Data Engineer in creating data pipelines

Skills and technologies data engineers need for data pipelines

In a multidisciplinary team (data engineers, BI users, and data scientists), the data engineer's role in creating data pipelines is mainly to ensure the availability and quality of data. In addition, a data engineer can collaborate with others on the team to design or implement a data-related product or feature, such as refining an existing data source or deploying a machine learning model.

- Data engineers must have a sound knowledge of at least one programming language, such as Python, Java, or Scala.

- Data engineers must know different types of databases (SQL and NoSQL), data platforms, concepts like MapReduce, stream and batch processing, and some basic theory of data itself, for instance, descriptive statistics, data types.

- Data engineers must have experience with several data storage frameworks and technologies, which they can put together to create data pipelines.

- Data engineers must gain proficiency in data warehouse tools, such as Teradata Data Warehouse, SAP Data Warehouse, IBM Db2, Oracle Exadata, Amazon Redshift (cloud-based), and Google BigQuery (cloud-based).

- Data engineers must also know the Big Data tools used in data pipelines: Hadoop, Elasticsearch, and ETL and data platforms.

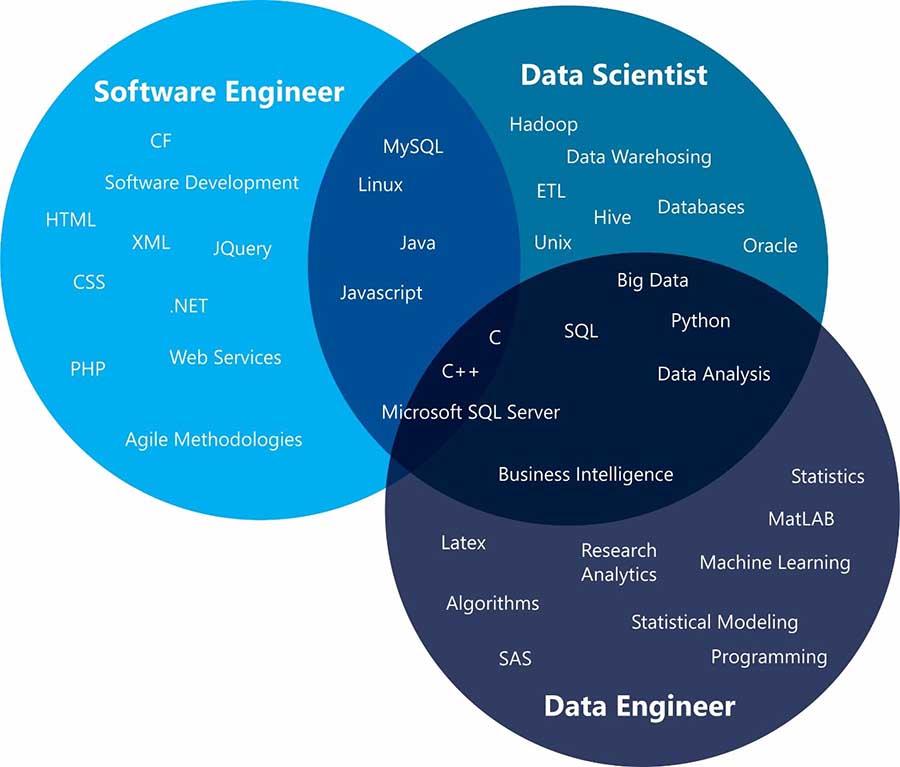

Both data engineers and data scientists perform tasks related to data, but they solve quite a different set of problems. They bring different skills to the table, and make use of different tools.

Source : Ryan Swanstrom

- Data engineers build and maintain massive data storage. They apply engineering skills such as ETL techniques, programming languages, database languages, and data warehousing.

- Data scientists, on the other hand, clean and analyze the data, look for insights in it, and deploy models for predictive and forecasting analytics. They often apply their mathematical skills, machine learning tools, and algorithms.

Identifying a business problem and asking relevant questions are important in creating robust data pipelines. This involves scouting for reliable and authentic sources and determining the stages through which the data will pass.

Are you ready to design and deploy a data pipeline for your organization? The effort pays off when hard work is combined with sound logic. Step up in your career with DASCA’s Data Engineering certifications.